A nice to have thing in Google Translate

Recently, I was using this very useful service from Google, when something funny happen. The text of the site was in English, instead of that foreign language. Perfect. Then, something unexpected happened. But, let’s go back to the beginning…….

A city and a language

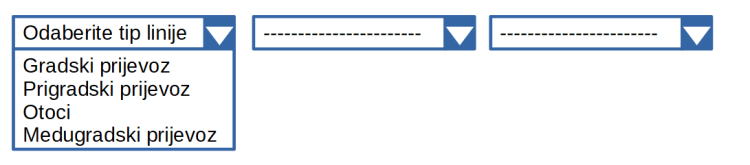

The town of Zadar is in Croatia. The official website is in the local language. no surprise here. In the middle of the page sit three option lists (commonly called drop boxes). Their content seems mysterious:

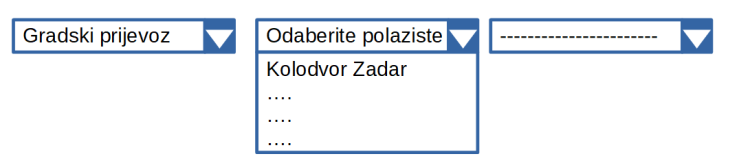

While the content of the list remains unknown to me, the choice is available, so why not? I pick up the first element in the list. The words tip linje, could they mean line type ? Maybe. After having made the choice, the second list changes with what looks like a selection of names:

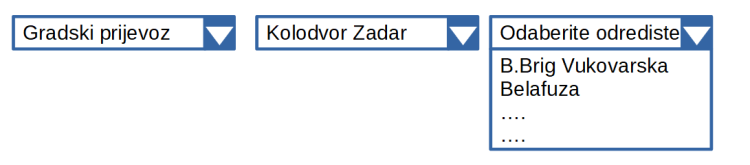

Now, the third list offers some options:

All this seems to be some sort of guidance. Too pity I don’t know the language. But, hey, there is a great tool that can translate the text into English. You know what I mean. Yes, Google, come to help!

Magic ….

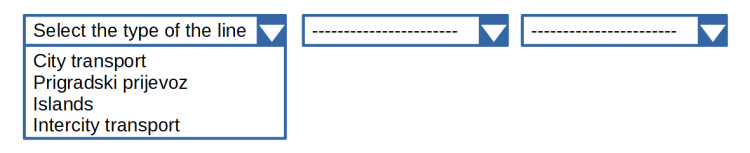

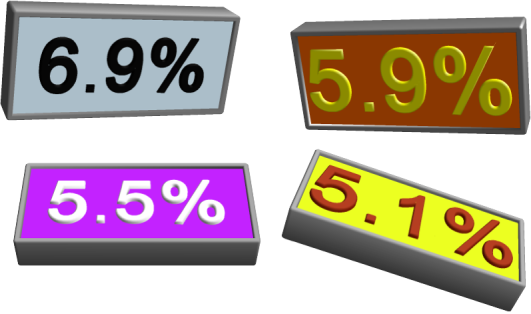

Quickly, I typed the address of the site and the magic happened. The unknown language (Croatian) changed to English. Too good to be true. The images were still in the local language, but this would be too complex a problem to solve, even for the giant of the giants. Anyway, see what I’m looking for: three lists of choices. Now, the content of the first one is clear:

Quickly, I pick up the first option. Then comes the surprise:

The second list didn’t change at all. This is because the text on the left changed and most likely, the connection between the two of them broke. No a real stunner, but still, far from what I wanted to get.

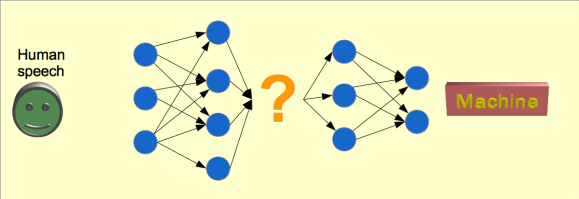

… is unexplained science

It seems that the translation worked even at the lowest of the levels, i.e. the internal navigation system of the page. The translator performed very well. Too well to be true! What I needed here was to have the lists of options with English text where applicable, keep the original boxes hidden, but functioning and operate the hidden levers as needed. I am confident this will be included in one of the future versions of Google Translate.

At last ….

Then I saw a pair of small buttons, on the top menu line:

After having clicked on the right button, magic happened. The page changed into English. The option lists continued to function.

What next

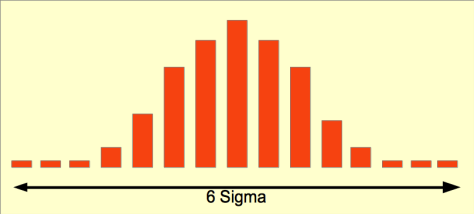

I believe the next version of the translation engines (there is more than one) should take into account the small details presented here. There are several possibilities:

- adapt to the type of the content (e.g. take care of the list boxes and the navigation system)

- detect the language change buttons and either present directly the correct version of the page

- or separate the navigation system of the page from the text content and reassemble accordingly

I am sure it is only a matter of time until the translation systems will work flawlessly. until then, pay attention to the small details, like the buttons at the top of the page.

The missing link

You can check for yourself here.

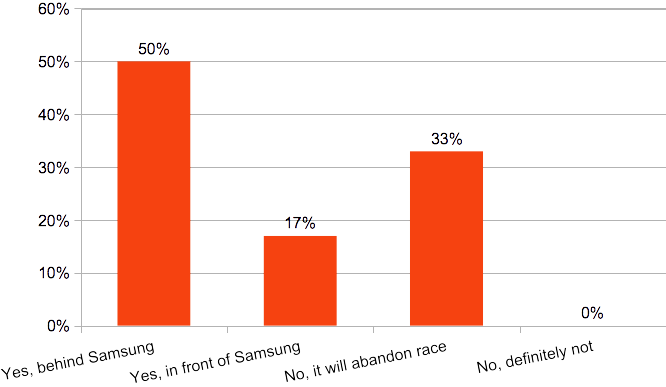

The question is why Samsung is so strong and why it is perceived as so strong. After all, the Korean giant is best known as a mass producer, not a as a pioneer. IoT is a technology based on diversity. This contrasts with mass production.

The question is why Samsung is so strong and why it is perceived as so strong. After all, the Korean giant is best known as a mass producer, not a as a pioneer. IoT is a technology based on diversity. This contrasts with mass production.